Innovation and Research Methods

NFER continually encourages and supports its staff not only to find imaginative uses for existing methods but also to introduce innovative ideas that improve the way we work and enhance the research we produce.

Our talented team of researchers, statisticians, psychometricians and economists are progressive, forward-thinking and dynamic.

Artificial Intelligence (AI) and Machine Learning

- We are continually exploring innovative ways of using educational data, including artificial intelligence and machine learning. Our work applying decision trees and random forests to the PISA 2018 data has shown that these machine learning methods can explain more variation than simple logistic regression analyses.

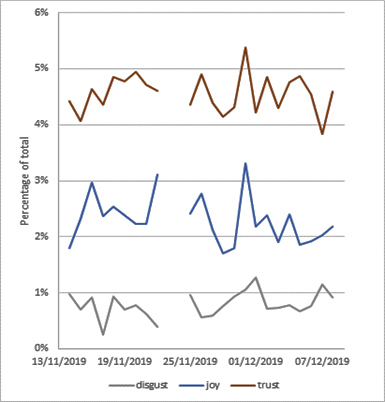

- We have developed a greater understanding of machine learning methodologies, including the use of natural language processing (NLP) and sentiment analysis to explore Twitter data on education in the run-up to the 2019 general election. Chart 1 shows an example of the type of sentiment analysis that is possible: it tracks the level of each basic emotion from the pre-election period, around manifesto publication, until just before the election.

Chart 1 – Sentiment analysis of Twitter data in the days before the 2019 general election

The investigation found that the most prevalent emotion in tweets mentioning education was ‘trust’. ‘Joy’ was less prevalent, and ‘disgust’ was the least prevalent. Although the daily averages of the emotions fluctuated somewhat across the period, the three emotions shown remained broadly stable, with no noticeable trend other than a possible increase in ‘disgust’ in the second half of the period.
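To make the idea of tracking emotions over time concrete, here is a minimal lexicon-based sketch in Python. It is not the method used in the investigation, whose details are not given here: the tiny word list `EMOTION_LEXICON`, the functions `tweet_scores` and `daily_emotion_averages`, the keyword filter and the sample tweets are all hypothetical. Each tweet is scored by the share of its words linked to each emotion, and the scores are then averaged per day, giving daily averages of the kind discussed above.

```python
from collections import defaultdict

# Hypothetical mini-lexicon for illustration only; a real analysis would use a
# published word-emotion lexicon covering far more words and emotions.
EMOTION_LEXICON = {
    "trust": {"trust"},
    "reliable": {"trust"},
    "promise": {"trust", "joy"},
    "great": {"joy"},
    "happy": {"joy"},
    "awful": {"disgust"},
    "disgusting": {"disgust"},
}
EMOTIONS = ("trust", "joy", "disgust")


def tweet_scores(text):
    """Share of a tweet's words associated with each emotion."""
    words = [w.strip(".,!?:;#@\"'").lower() for w in text.split()]
    counts = dict.fromkeys(EMOTIONS, 0)
    for word in words:
        for emotion in EMOTION_LEXICON.get(word, ()):
            counts[emotion] += 1
    n_words = max(len(words), 1)
    return {e: c / n_words for e, c in counts.items()}


def daily_emotion_averages(tweets, keyword="education"):
    """tweets: iterable of (date, text) pairs.

    Keeps only tweets mentioning `keyword` and returns
    {date: {emotion: mean per-tweet score}} in date order.
    """
    totals = defaultdict(lambda: dict.fromkeys(EMOTIONS, 0.0))
    n_tweets = defaultdict(int)
    for day, text in tweets:
        if keyword not in text.lower():
            continue  # skip tweets that do not mention education
        for emotion, score in tweet_scores(text).items():
            totals[day][emotion] += score
        n_tweets[day] += 1
    return {
        day: {e: totals[day][e] / n_tweets[day] for e in EMOTIONS}
        for day in sorted(totals)
    }


# Hypothetical sample tweets
tweets = [
    ("2019-12-01", "Education funding promise is great"),
    ("2019-12-01", "Awful education plans"),
    ("2019-12-02", "I trust their education policy"),
    ("2019-12-02", "Nice weather today"),  # ignored: does not mention education
]
averages = daily_emotion_averages(tweets)
```

Simple word counting ignores negation ("not happy") and sarcasm, so a fuller pipeline would typically add preprocessing or use a trained classifier.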

Evaluation Approaches - Outcome Harvesting

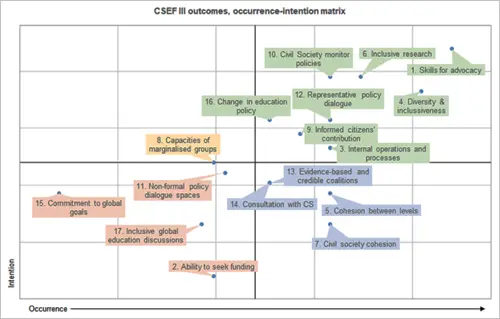

- In our international work, we are using innovative approaches, such as Outcome Harvesting, to evaluate complex, global education initiatives. Our evaluation of the Civil Society Education Fund used Outcome Harvesting to understand the intended and unintended outcomes of a programme that supported citizen engagement in education sector policy across four continents. An example of this work is shown in Chart 2. It illustrates an application of Outcome Harvesting that goes beyond documentary analysis by building in a process for triangulating outcomes, which strengthens the validity of the findings.

Chart 2 – Outcome Harvesting and the association between intention and occurrence.

In the chart, outcomes in green were mostly reported as both intended and achieved, while outcomes in blue were mostly reported as achieved although they were not explicitly among the coalitions’ goals. Outcomes in yellow were targeted but not always achieved, and outcomes in orange were more challenging to achieve and ‘less important’ to coalitions (i.e. not targeted).
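The colour coding amounts to a two-way classification: was an outcome mostly reported as achieved, and was it an explicit goal? The Python sketch below expresses that rule. The function `classify_outcome`, the "more than half of reports" threshold and the sample outcomes are assumptions for illustration, not the evaluation's actual coding rules.

```python
def classify_outcome(n_achieved, n_reports, targeted, threshold=0.5):
    """Place one harvested outcome into the chart's four colour categories.

    n_achieved / n_reports: how many reports describe the outcome as achieved.
    targeted: whether the outcome was an explicit goal.
    Treating 'mostly achieved' as more than half of reports is an assumption.
    """
    if n_reports <= 0:
        raise ValueError("an outcome needs at least one report")
    mostly_achieved = n_achieved / n_reports > threshold
    if mostly_achieved and targeted:
        return "green"   # achieved and intended
    if mostly_achieved:
        return "blue"    # achieved, though not an explicit goal
    if targeted:
        return "yellow"  # targeted but not always achieved
    return "orange"      # harder to achieve and not targeted


# Hypothetical outcomes: (name, reports of achievement, total reports, targeted?)
outcomes = [
    ("outcome A", 8, 10, True),
    ("outcome B", 7, 10, False),
    ("outcome C", 3, 10, True),
    ("outcome D", 2, 10, False),
]
colours = {name: classify_outcome(a, n, t) for name, a, n, t in outcomes}
```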

Data scraping

- We have used data scraping to harvest data from published documents, producing more immediate results and visualisations. This technique was employed in a recent NFER blog on teacher applications. The approach allows researchers to access a wider range of data sources more easily and can deliver quicker and/or deeper insight.
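As a minimal sketch of the technique, the Python below pulls the rows out of an HTML table using only the standard library's `html.parser`. It assumes the published data sit in a simple HTML table; the class `TableScraper`, the function `scrape_table` and the sample figures are hypothetical and do not come from the NFER blog. Documents published as PDFs or spreadsheets would need different tools.

```python
from html.parser import HTMLParser


class TableScraper(HTMLParser):
    """Collect the text of each <th>/<td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def scrape_table(html):
    """Return the table rows in an HTML string as lists of cell text."""
    parser = TableScraper()
    parser.feed(html)
    parser.close()
    return parser.rows


# Hypothetical page fragment; in practice the HTML would be downloaded first,
# e.g. with urllib.request.urlopen(url).read().decode("utf-8").
page = """
<table>
  <tr><th>Subject</th><th>Applications</th></tr>
  <tr><td>Subject A</td><td>120</td></tr>
  <tr><td>Subject B</td><td>45</td></tr>
</table>
"""
header, *body = scrape_table(page)
applications = {name: int(count) for name, count in body}
```

Once the rows are in Python structures they can be fed straight into analysis or charting code, which is what makes scraped results quick to turn into visualisations.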

Participatory approaches

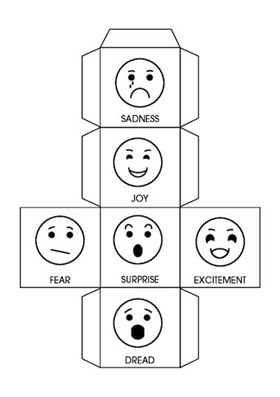

- We used new participatory approaches as part of our evaluation of a girls’ education programme in Sierra Leone. The programme targeted marginalised girls and children with disabilities. We adapted gender-sensitive and inclusive participatory tools to ensure the voices of the most vulnerable people were reflected in the evaluation. One example of these methods is described below.

We adapted the Feelings Dice method, drawing on our experience of working with children (including those with disabilities), to better understand and explore pupils’ experiences in school.

Multi-stage and computer adaptive testing

- Adaptive and multi-stage testing is of increasing interest to some stakeholders, especially given the moves in Scotland, Wales and Australia towards various forms of adaptive assessment. NFER has supported multi-stage testing in Australia and has run an adaptive testing simulation to illustrate the increased precision of results across the ability spectrum. Although these assessments require more questions to be developed, they adapt to each child's ability level, which can give a better experience of the assessment and potentially save teachers time. (Computer) adaptive testing, sometimes referred to as personalised testing, was the subject of a recent blog by our Head of Assessment Services, Angela Hopkins, which took an objective look at the benefits and challenges of an adaptive testing model.
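The following Python sketch shows how an adaptive test can pitch items at a pupil's ability. It is a generic illustration, not NFER's simulation: it assumes a Rasch (one-parameter logistic) item model, picks the unused item with the highest Fisher information at the current estimate, and updates the estimate by expected a posteriori (EAP) scoring on a grid with a standard-normal prior. The item bank, the simulated pupil and all function names are hypothetical.

```python
import math


def p_correct(theta, b):
    """Rasch (one-parameter logistic) probability of a correct answer."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def item_information(theta, b):
    """Fisher information of a Rasch item; largest when b is close to theta."""
    p = p_correct(theta, b)
    return p * (1.0 - p)


def eap_ability(responses, grid):
    """Expected a posteriori ability with a standard-normal prior.

    responses: list of (item difficulty, answered correctly?) pairs.
    """
    weights = []
    for theta in grid:
        w = math.exp(-0.5 * theta * theta)  # unnormalised N(0, 1) prior
        for b, correct in responses:
            p = p_correct(theta, b)
            w *= p if correct else 1.0 - p
        weights.append(w)
    return sum(t * w for t, w in zip(grid, weights)) / sum(weights)


def run_adaptive_test(item_bank, answer, n_items):
    """Give up to n_items items, each the most informative at the current estimate.

    item_bank: list of item difficulties; answer(b) -> bool simulates the pupil.
    Returns the final ability estimate and the difficulties administered.
    """
    grid = [i / 10 for i in range(-40, 41)]  # abilities from -4.0 to 4.0
    remaining = list(item_bank)
    responses = []
    theta = 0.0  # start at the prior mean
    for _ in range(min(n_items, len(remaining))):
        b = max(remaining, key=lambda d: item_information(theta, d))
        remaining.remove(b)
        responses.append((b, answer(b)))
        theta = eap_ability(responses, grid)
    return theta, [b for b, _ in responses]


# Hypothetical 13-item bank and a simulated pupil who answers every item
# easier than 1.2 correctly and every harder item incorrectly.
bank = [i / 2 for i in range(-6, 7)]  # difficulties -3.0 ... 3.0
estimate, administered = run_adaptive_test(bank, lambda b: b < 1.2, n_items=6)
```

Because each item is pitched near the current estimate, every response carries close to the most information available, which is why an adaptive test can reach a given precision with fewer items than a fixed form; the trade-off, as noted above, is the need for a larger bank of questions covering the whole ability range.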

Non-cognitive assessments

- NFER has led a project for the Duke of Edinburgh’s Award scheme, developing and delivering non-cognitive assessments to examine the scheme’s impact on participants’ wellbeing. We also conducted secondary analysis of PISA 2018 data exploring life satisfaction and the wellbeing of pupils in England, Wales and Northern Ireland. In addition, we maintain an internal database of life-skills measurement tools, which has increased our ability to produce and evaluate non-traditional assessments.

Other Activities

- We have developed a tailored approach to conducting applied political economy analysis (PEA) of education systems. It generates strategic insights into how local education systems operate within their political contexts and how they influence, and are influenced by, social, economic and political factors.

Our approach builds on the political economy analysis framework for international development practitioners developed by The Policy Practice, a UK-based organisation. Within that broad framework, our research team incorporated education-specific concepts and research methods to analyse education issues in international settings. We then tested the approach by studying the political economy of secondary school inspections and improvements in Uganda.

Our PEA approach provides local and international education stakeholders with an in-depth understanding of, and recommendations regarding, the enablers and barriers to education interventions, policies, and practice.